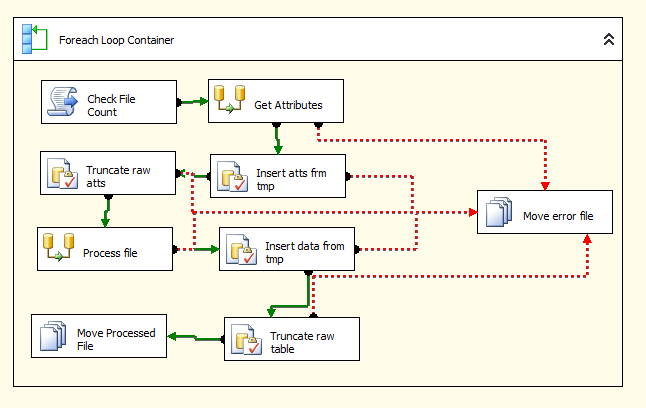

The 2012 version of SQL Server has brought with it a number of new features, not least the new Management Studio, which is built on the Visual Studio 2010 shell. However, the changes are more than just aesthetic, as I found out when I tried to upgrade some SQL Server Integration Services (SSIS) packages from SQL Server 2008 to 2012. Most packages upgraded fine, but I ran into issues with any package that used Script Tasks containing references to external DLLs.
